A showcase of projects that used Apparance in the production, prototyping, or evaluation of their ideas.

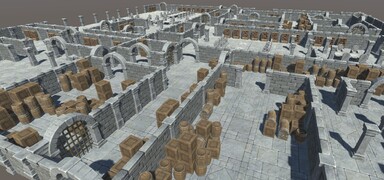

Gloomhaven is a tactical combat board game, with players working together to vanquish menacing dungeons and forgotten ruins. It involves card play, strategy, and cooperation. The action is played out on a series of hex-based map-tiles interconnected to form weaving dungeons with open spaces and narrow corridors. Each scenario has a particular room layout, rules, and risks and rewards for the players.

It was a huge undertaking to recreate the whole game, with nearly 100 bespoke campaign scenarios, dozens of characters, hundreds of cards, and thousands of rules to adhere to. Building it all in one go was too great a development risk, so the team at Flaming Fowl decided to approach it from a different direction.

Starting with a subset of the whole game, they planned to use various procedural techniques to build an “adventure mode” version of the game, which mixed the available elements into randomised scenario encounters set across a generated map. This approach meant they could enter early access with a much smaller and more manageable set of assets and features, yet still bring the true Gloomhaven gameplay experience from the start. The dynamic nature of parameterised procedural systems was ideal for coping with the unknowns of populating an endless variety of maptile configurations and interconnections, something that just wouldn’t be possible by hand.

The team at Flaming Fowl decided Apparance was a good fit for the complex and dynamic nature of what they were trying to achieve.

Technical Details

The pipeline for building the maps in Gloomhaven is a hybrid of configuration data, code, data-driven procedures, and artist-created content palettes, each chosen according to its strengths and the constraints and requirements of each part of the process. The scenario design, map layout, and maptile analysis are all performed in code, written by the gameplay team according to the game's requirements for mission structure and gameplay rules. After the maptile analysis stage, these layouts are turned into the actual placed meshes and prefabs that form the environment the game plays out in. Apparance is responsible for turning the room and wall positions and their styling into placed scenery prefabs.
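
As a rough illustration of that hand-off, the sketch below takes a set of hex cells for one room plus a style name and emits a list of prefab placements. Everything in it is an assumption made for the example: the axial hex coordinates, the `PALETTE` contents, the prefab names, and the `place_room` function are hypothetical and are not taken from the Gloomhaven code or from Apparance.

```python
import random
from dataclasses import dataclass

# Axial hex neighbour offsets, one per hex edge.
HEX_DIRS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]

# Hypothetical content palette: style name -> candidate prefab names.
PALETTE = {
    "crypt": {"floor": ["crypt_floor_a", "crypt_floor_b"], "wall": ["crypt_wall_a"]},
}


@dataclass(frozen=True)
class Placement:
    prefab: str
    q: int
    r: int
    edge: int  # -1 for floor pieces, otherwise an index into HEX_DIRS


def place_room(cells, style, seed):
    """Turn a room's hex cells and style into a list of prefab placements."""
    rng = random.Random(seed)  # seeded, so the same layout always dresses the same way
    palette = PALETTE[style]
    placements = []
    for q, r in sorted(cells):
        placements.append(Placement(rng.choice(palette["floor"]), q, r, -1))
        for edge, (dq, dr) in enumerate(HEX_DIRS):
            if (q + dq, r + dr) not in cells:  # no neighbouring cell: this edge needs a wall
                placements.append(Placement(rng.choice(palette["wall"]), q, r, edge))
    return placements


room = {(0, 0), (1, 0)}
for p in place_room(room, "crypt", seed=7):
    print(p)
```

Seeding the random choice per room keeps the dressing stable between runs, which matters when the layout itself is regenerated often.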

Gardens

A project working with Salix Games to build a procedural walled garden prototype. Rich environments with interesting ground structure, barriers, features, pools, flowerbeds, foliage, and trees were created to match the style and mood they required. Built on Unreal 5, leveraging Nanite for detailed meshes and Lumen for soft lighting and ambient occlusion, some beautiful scenery was realised.

Later experiments with dynamic procedural objects proved successful as live foliage growth effects were created for a 'bindweed' style of game element.

See more shots and information on Twitter.

Technical Details

Large gameplay areas were also created (not shown) where each play of the game produced a new environment with new challenges and experiences. A simple description language was parsed by the procedures to drive high-level layout and configuration of the spaces, allowing rapid adjustment and balancing.

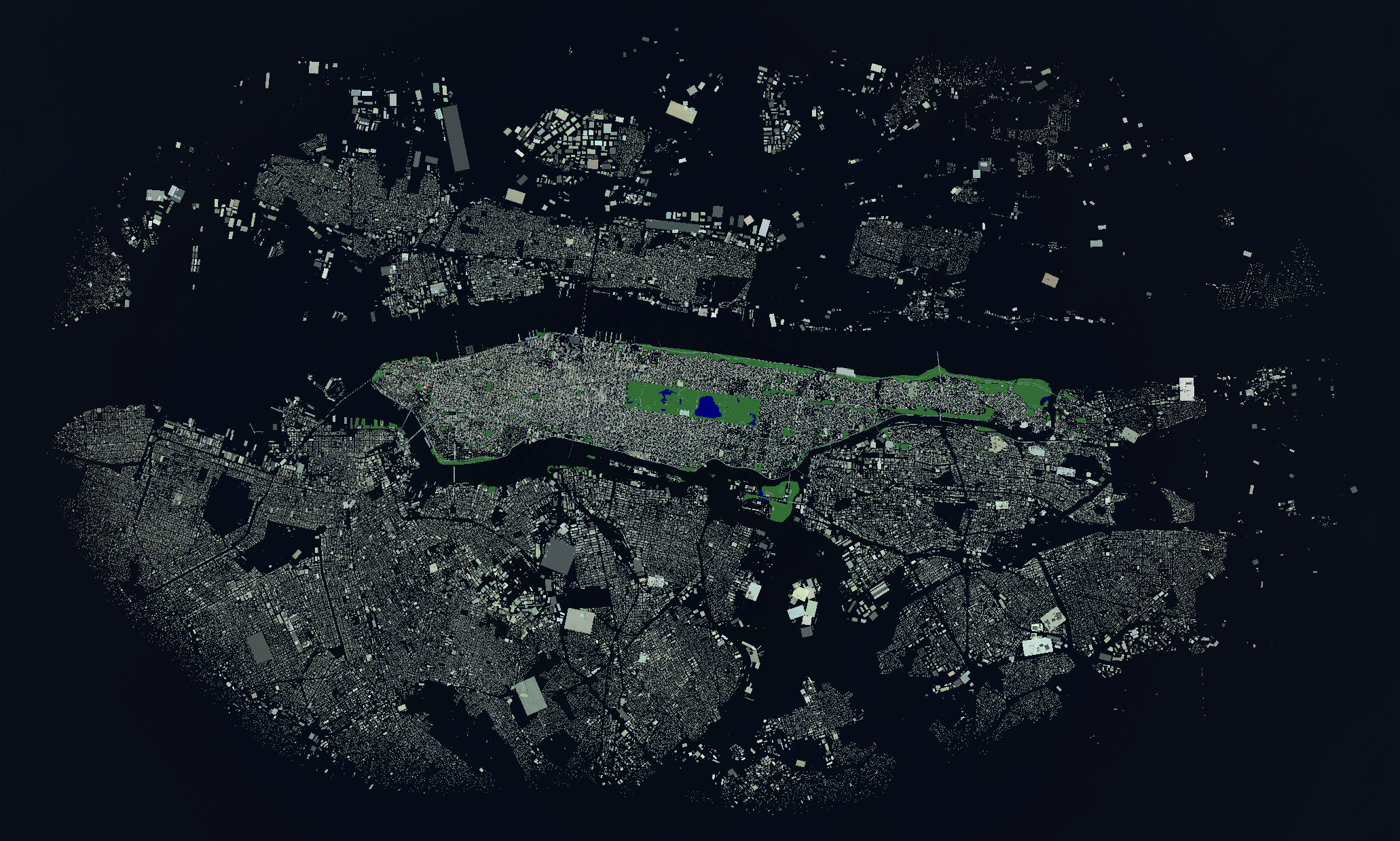

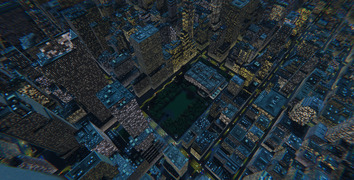

New York

Building a large rendition of New York from OpenStreetMap data. A commissioned investigation that resulted in some beautiful screenshots. An area of the city approximately 25km x 40km was brought to life in glorious detail.

Technical Details

Approximately 1.5GB of source data (XML) from OpenStreetMap was processed down to 30MB of binary data and used to drive the city generation procedures. The city was broken up into 1km blocks and several layers for generation. All buildings, ground, trees, roads, and rooftop detail are generated geometry, mostly combined into shared geometry buffers (several shared uber-shaders). The whole city takes about 30s to fully generate, including the fully populated rooftop detail (which is usually only generated near the camera).
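
For scale, a 25km x 40km area cut into 1km blocks gives 1,000 blocks, and going from 1.5GB to 30MB is roughly a 50:1 reduction. The sketch below shows one way such a preprocessing pass could look: it streams `<node>` elements from OpenStreetMap XML, projects them to metres, and packs them into per-block binary records. The record layout (two little-endian float32 offsets per node), the equirectangular projection, and the names `pack_nodes` and `to_metres` are assumptions made for illustration, not the format used in the project.

```python
import math
import struct
import xml.etree.ElementTree as ET
from collections import defaultdict

BLOCK_M = 1000.0      # 1km blocks, as described above
EARTH_R = 6371000.0   # mean Earth radius in metres


def to_metres(lat, lon, lat0, lon0):
    """Equirectangular approximation around the origin (lat0, lon0)."""
    x = math.radians(lon - lon0) * EARTH_R * math.cos(math.radians(lat0))
    y = math.radians(lat - lat0) * EARTH_R
    return x, y


def pack_nodes(source, lat0, lon0):
    """Stream <node> elements and pack them per block as float32 (x, y) offsets."""
    blocks = defaultdict(bytearray)
    for _, elem in ET.iterparse(source, events=("end",)):
        if elem.tag == "node":
            x, y = to_metres(float(elem.get("lat")), float(elem.get("lon")), lat0, lon0)
            bx, by = math.floor(x / BLOCK_M), math.floor(y / BLOCK_M)
            # Store the position relative to the block corner to keep float32 precise.
            blocks[(bx, by)] += struct.pack("<ff", x - bx * BLOCK_M, y - by * BLOCK_M)
        elem.clear()  # drop parsed content so a large file is not held in memory
    return blocks
```

`pack_nodes` accepts a filename or a binary file object, so a small extract can be tested through `io.BytesIO` before running on a full export. Ways, relations, and tags are ignored here; a real pass would need them for roads and building outlines.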

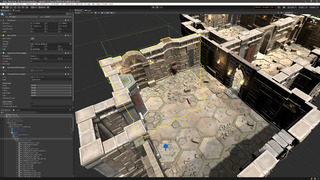

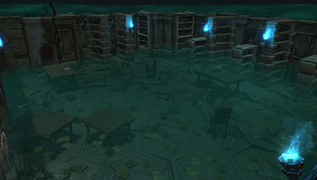

Dungeons

Constructing large, sprawling dungeons during the development of the Apparance Unity plugin. This served as quite a thorough test case whilst developing and improving the Unity plugin. It's also quite a deep dive into certain approaches to dungeon generation.

Technical Details

The fundamental principle employed here is "divide and conquer": starting with the overall dungeon footprint and where you want the entrance and exit to be, you start chopping the space up. At each step you check whether the split parts are small enough to move on to the next type of space: dungeon -> room -> area -> objects. An important part of this process is to ensure you aren't changing the connectivity in a way that breaks the gameplay capability of the dungeon, i.e. whenever you split, add a connecting door (or more if it's a long wall).

As you move from dungeon to rooms you can branch to different room types if you want, each having its own sub-procedure hierarchy to implement it. The dungeon regions are split so as not to cut doorways in half, and the room areas are similarly chosen so as not to interfere with doors. Finally, when your area is small enough, you can place objects, again driven by room type and by whether they are along a wall, in a corner, or in open space.
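
The first level of that process (dungeon to rooms) can be sketched as a recursive rectangle split. This is a minimal illustration rather than the Apparance procedures themselves: the integer grid, the size thresholds, and the names `Rect`, `Door`, and `split` are all assumptions. Each split adds at least one door in the new wall, adds more for a long wall, and refuses any cut position that would run into an existing door.

```python
import random
from dataclasses import dataclass

MAX_ROOM = 6    # stop splitting once both sides are this small (assumed value)
MIN_ROOM = 3    # never create a piece narrower than this (assumed value)
LONG_WALL = 8   # one extra door per this many units of new wall (assumed value)


@dataclass(frozen=True)
class Rect:
    x: int
    y: int
    w: int
    h: int


@dataclass(frozen=True)
class Door:
    x: int
    y: int
    vertical: bool  # True: the door sits in a wall running along x == self.x


def split(rect, doors, rng):
    """Recursively split rect into rooms, appending connecting doors to doors.

    Assumes every side of the starting footprint is at least 2 units long.
    """
    if rect.w <= MAX_ROOM and rect.h <= MAX_ROOM:
        return [rect]  # small enough: hand over to the next level (room -> area)
    vertical = rect.w >= rect.h  # cut across the longer side
    if vertical:
        lo, hi = rect.x + MIN_ROOM, rect.x + rect.w - MIN_ROOM
        # Doors in the top/bottom walls that a vertical cut would run into.
        blocked = {d.x for d in doors
                   if not d.vertical and d.y in (rect.y, rect.y + rect.h)}
        wall_start, wall_len = rect.y, rect.h
    else:
        lo, hi = rect.y + MIN_ROOM, rect.y + rect.h - MIN_ROOM
        blocked = {d.y for d in doors
                   if d.vertical and d.x in (rect.x, rect.x + rect.w)}
        wall_start, wall_len = rect.x, rect.w
    cuts = [c for c in range(lo, hi + 1) if c not in blocked]
    if not cuts:
        return [rect]  # no safe cut available: keep the region whole
    cut = rng.choice(cuts)
    # Always add one connecting door, plus extras along a long wall.
    count = 1 + wall_len // LONG_WALL
    for s in rng.sample(range(wall_start + 1, wall_start + wall_len), count):
        doors.append(Door(cut, s, True) if vertical else Door(s, cut, False))
    if vertical:
        a = Rect(rect.x, rect.y, cut - rect.x, rect.h)
        b = Rect(cut, rect.y, rect.x + rect.w - cut, rect.h)
    else:
        a = Rect(rect.x, rect.y, rect.w, cut - rect.y)
        b = Rect(rect.x, cut, rect.w, rect.y + rect.h - cut)
    return split(a, doors, rng) + split(b, doors, rng)


doors = [Door(0, 5, True), Door(24, 10, True)]  # entrance and exit on the outer walls
rooms = split(Rect(0, 0, 24, 16), doors, random.Random(1))
print(len(rooms), "rooms,", len(doors) - 2, "connecting doors")
```

Because every split leaves a door between its two halves and later cuts never land on a door, every room stays reachable from the entrance. The same pattern would repeat at the room -> area level with different thresholds and, per room type, different sub-procedures.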

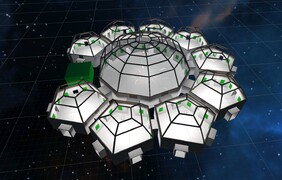

Multiverse

Proof of concept for building a flexible and easily authored virtual exhibition space driven by simple room definition files.

Technical Details

The Multiverse team wanted to explore the possibility of procedurally generating room layout, geometry, interconnection, and decoration using a text description language written in XML. This meant new layouts and adjustments to existing layouts could be made very simply, and all rooms would update. There were elements for walls, doors, floor, etc., as well as descriptive language for how display spaces (floor and wall) should be broken up, and then what populated them.
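
To give a feel for what such a definition might involve, here is a small sketch that parses a room description into plain data ready to drive generation. The actual Multiverse schema is not documented here, so every tag and attribute name in `ROOM_XML` (`room`, `wall`, `door`, `floor`, `display`, `slots`, and so on) is invented for the example, as are the `Room` and `Wall` types.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field

# Hypothetical room definition: tag and attribute names are illustrative only.
ROOM_XML = """
<room name="gallery" width="12" depth="8">
  <floor material="oak"/>
  <wall side="north"><display slots="3" content="paintings"/></wall>
  <wall side="east"><door to="atrium"/></wall>
</room>
"""


@dataclass
class Wall:
    side: str
    doors: list = field(default_factory=list)  # names of the rooms each door leads to
    display_slots: int = 0                     # how many display spaces to carve out
    content: str = ""                          # what populates those spaces


@dataclass
class Room:
    name: str
    width: float
    depth: float
    floor: str
    walls: list


def parse_room(text):
    """Parse one room definition into a Room with its walls, doors, and displays."""
    root = ET.fromstring(text)
    walls = []
    for w in root.findall("wall"):
        display = w.find("display")
        walls.append(Wall(
            side=w.get("side"),
            doors=[d.get("to") for d in w.findall("door")],
            display_slots=int(display.get("slots")) if display is not None else 0,
            content=display.get("content") if display is not None else "",
        ))
    floor = root.find("floor")
    material = floor.get("material") if floor is not None else ""
    return Room(root.get("name"), float(root.get("width")),
                float(root.get("depth")), material, walls)


room = parse_room(ROOM_XML)
print(room.name, [(w.side, w.doors, w.display_slots) for w in room.walls])
```

Keeping the parsed result as plain data means a layout edit only touches the XML file, and every room built from it regenerates from the same description.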

Have a look at the Archive Page if you'd like to see some of the older demos, tests, and experimental screenshots collected throughout the development of Apparance.
